
This means that the rank at the critical point is lower than the rank at some neighbouring point. To clarify the equality, compare this article with the formula that follows the quote: 'Linear approximations for vector functions of a vector variable are obtained in the same way, with the derivative at a point replaced by the Jacobian matrix.'
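For reference, the linear approximation that the quote describes can be written as follows. This is a sketch under the assumption that $f$ is differentiable at a point $a$; the symbol $J_f(a)$ for the Jacobian matrix evaluated at $a$ is notation introduced here for illustration.

$$
f(x) \approx f(a) + J_f(a)\,(x - a)
$$

In the single-variable case $J_f(a)$ reduces to the number $f'(a)$, which recovers the familiar tangent-line formula $f(x) \approx f(a) + f'(a)(x - a)$.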

For example, the existence of all directional derivatives at a point does not imply continuity.
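A standard example of this, chosen here for illustration and not taken from the text above, is the function

$$
f(x, y) = \begin{cases} \dfrac{x^2 y}{x^4 + y^2}, & (x, y) \neq (0, 0), \\[2mm] 0, & (x, y) = (0, 0). \end{cases}
$$

Along any direction $(u, v)$ the directional derivative at the origin exists:

$$
\lim_{t \to 0} \frac{f(tu, tv)}{t} = \lim_{t \to 0} \frac{u^2 v}{t^2 u^4 + v^2} = \begin{cases} u^2 / v, & v \neq 0, \\ 0, & v = 0. \end{cases}
$$

Yet along the parabola $y = x^2$ we get $f(x, x^2) = \dfrac{x^4}{2x^4} = \dfrac{1}{2}$ for every $x \neq 0$, so $f$ does not tend to $f(0, 0) = 0$ and is not continuous at the origin.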

If $f : \mathbb{R}^n \to \mathbb{R}^m$ is a differentiable function, a critical point of $f$ is a point where the rank of the Jacobian matrix is not maximal. Such a function takes a point $x \in \mathbb{R}^n$ as input and produces the vector $f(x) \in \mathbb{R}^m$ as output. The Jacobian matrix of $f$ is then defined to be an $m \times n$ matrix, denoted by $J$, whose $(i, j)$th entry is $J_{ij} = \partial f_i / \partial x_j$.

If the Jacobian determinant is not zero at a point, then the function is locally invertible near this point, that is, there is a neighbourhood of this point in which the function is invertible. The (unproved) Jacobian conjecture is related to global invertibility in the case of a polynomial function, that is, a function defined by $n$ polynomials in $n$ variables. It asserts that, if the Jacobian determinant is a non-zero constant (or, equivalently, that it does not have any complex zero), then the function is invertible and its inverse is a polynomial function.

For a function $\mathbb{R} \to \mathbb{R}$, having a derivative and being differentiable are equivalent concepts, but for functions of several variables things are more complicated and less intuitive. If $f$ is differentiable at $a$, then the linear approximation $L$ is a good approximation of $f$ so long as $x$ is close to $a$. In a single variable the derivative is the best linear approximation of the function, so I guess this extends to the multivariable case, but we can't use a number for this (why?) and instead we use a matrix. I can't understand the concept of the linear transformation that we use to define the Fréchet derivative. I saw related posts, but one question remains.

These will be used in the tangent approximation formula, which is one of the keys to multivariable calculus. Following this we will study partial derivatives.
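To make the link between the Jacobian determinant, critical points, and local invertibility concrete, here is a minimal sketch in plain Python. It approximates the Jacobian by central differences, which is a numerical stand-in and not the exact definition. The names `numerical_jacobian`, `det2`, and `example_map`, the step size `h`, and the map $(x, y) \mapsto (x^2 - y^2, 2xy)$ are all assumptions chosen for illustration.

```python
def numerical_jacobian(f, x, h=1e-6):
    """Approximate the m x n Jacobian of f at the point x by central differences."""
    n = len(x)
    m = len(f(x))
    jac = [[0.0] * n for _ in range(m)]
    for j in range(n):
        forward = list(x)
        backward = list(x)
        forward[j] += h
        backward[j] -= h
        f_plus = f(forward)
        f_minus = f(backward)
        for i in range(m):
            # Entry (i, j) approximates the partial derivative of f_i with respect to x_j.
            jac[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return jac


def det2(a):
    """Determinant of a 2 x 2 matrix given as nested lists."""
    return a[0][0] * a[1][1] - a[0][1] * a[1][0]


def example_map(p):
    """Hypothetical example map (x, y) -> (x^2 - y^2, 2xy)."""
    x, y = p
    return [x * x - y * y, 2 * x * y]


jac_at_point = numerical_jacobian(example_map, [1.0, 2.0])
jac_at_origin = numerical_jacobian(example_map, [0.0, 0.0])

# The exact determinant is 4 * (x^2 + y^2): non-zero at (1, 2), zero at the origin.
print(det2(jac_at_point))
print(det2(jac_at_origin))
```

For this map the determinant is non-zero at $(1, 2)$, so the map is locally invertible there, while at the origin the Jacobian is the zero matrix, its rank is not maximal, and the origin is a critical point.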

In vector calculus, the Jacobian matrix (/dʒəˈkoʊbiən/, /dʒɪ-, jɪ-/) of a vector-valued function of several variables is the matrix of all its first-order partial derivatives. Suppose $f : \mathbb{R}^n \to \mathbb{R}^m$ is a function such that each of its first-order partial derivatives exists on $\mathbb{R}^n$. When the Jacobian matrix of $f$ is square, that is, when the function takes the same number of variables as input as the number of vector components of its output, its determinant is referred to as the Jacobian determinant. Both the matrix and (if applicable) the determinant are often referred to simply as the Jacobian in the literature.

This is the first half of an integrated treatment of linear algebra, real analysis, and multivariable calculus. By combining these disciplines into one course, we show important relations between each, which allows us to use results from one topic to gain a deeper understanding of other topics.

Although conceptually similar to derivatives of a single variable, the uses, rules and equations for multivariable derivatives can be more complicated. To help us understand and organize everything, our two main tools will be the tangent approximation formula and the gradient vector. Our main application in this unit will be solving optimization problems, that is, solving problems about finding maxima and minima. We will do this in both unconstrained and constrained settings.

Said differently, derivatives are limits of ratios. Of course, we'll explain what the pieces of each of these ratios represent.
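Written out in full, the matrix of all first-order partial derivatives of a function with components $f_1, \dots, f_m$ and variables $x_1, \dots, x_n$ has the following layout. The polar-coordinate map used afterwards is an illustrative choice, not an example from the text above.

$$
J = \begin{bmatrix}
\dfrac{\partial f_1}{\partial x_1} & \cdots & \dfrac{\partial f_1}{\partial x_n} \\
\vdots & \ddots & \vdots \\
\dfrac{\partial f_m}{\partial x_1} & \cdots & \dfrac{\partial f_m}{\partial x_n}
\end{bmatrix}
$$

For the square case, take $f(r, \theta) = (r\cos\theta,\; r\sin\theta)$:

$$
J = \begin{bmatrix} \cos\theta & -r\sin\theta \\ \sin\theta & r\cos\theta \end{bmatrix},
\qquad
\det J = r\cos^2\theta + r\sin^2\theta = r .
$$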

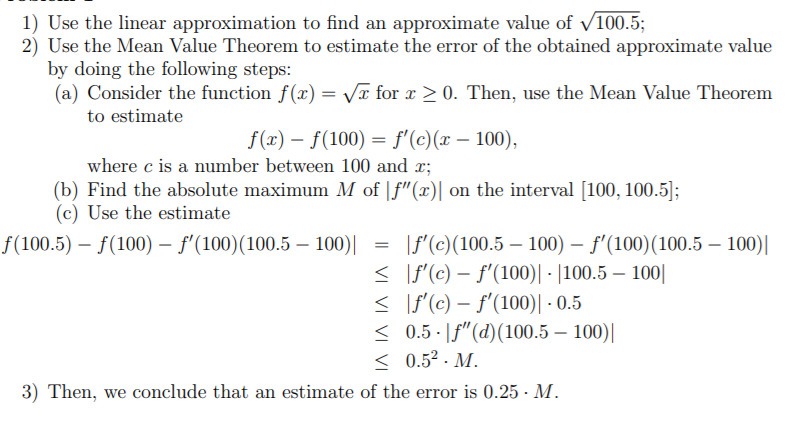

In this unit we will learn about derivatives of functions of several variables. Conceptually these derivatives are similar to those for functions of a single variable: they measure rates of change, they are used in approximation formulas, and they help identify local maxima and minima. As you learn about partial derivatives you should keep the first point, that all derivatives measure rates of change, firmly in mind.

Recall that, in the CLP-1 text, we started with the constant approximation, then improved it to the linear approximation by adding in degree one terms, then improved that to the quadratic approximation by adding in degree two terms, and so on.

The fundamental notion is that of 'linear approximation', while the concept of partial derivative should only come afterwards. So, the definition you proposed completely fails to capture the idea of 'approximating a non-linear function by a linear one', which is why we do not use it.
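The progression from constant to linear to quadratic approximation can be sketched in vector form as follows. This assumes a scalar-valued function $f : \mathbb{R}^n \to \mathbb{R}$ that is twice differentiable at $a$; the symbols $\nabla f(a)$ for the gradient and $H_f(a)$ for the Hessian matrix are notation introduced here.

$$
\begin{aligned}
f(x) &\approx f(a) && \text{(constant)} \\
f(x) &\approx f(a) + \nabla f(a) \cdot (x - a) && \text{(linear)} \\
f(x) &\approx f(a) + \nabla f(a) \cdot (x - a) + \tfrac{1}{2}\,(x - a)^{\mathsf{T}} H_f(a)\,(x - a) && \text{(quadratic)}
\end{aligned}
$$

Each line adds the terms of the next degree in $x - a$ to the line before it.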
